Ground Truth

On ships, valves, and the quiet places where reality overrules our plans.

My grandfather’s workshop was where machines came to confess.

They arrived half-broken from all over the country, heavy and silent on the back of trucks. Old milling machines from Sweden, presses from Canada, things with brass plates stamped in languages he couldn’t read. What never arrived with them were spare parts or manuals.

When a machine died, nobody brought drawings. They brought a story: “It used to run like this. Then it started making this noise. Then it stopped.”

My grandfather always started there. He listened to the story. Then he listened to the machine.

He would run it for a second, just long enough to hear the wrong rhythm in the gears or feel the wrong vibration in the frame. Only then would he open it up. A cracked shaft. A missing tooth in a gear. A bearing welded to its housing by heat. Somewhere inside, the official design had met dust and metal fatigue and real life — and lost.

In the country where that machine was born, the cure lived on paper: you looked up a part number and ordered the replacement that matched the drawing.

In his world, the drawing might as well not exist. The only “blueprint” available was the way the machine behaved when you turned it on.

So he worked backwards. Measure the gap where a tooth used to be. Watch how two gears mesh. Listen for the knock when they don’t. From that, he turned a piece of anonymous steel on the lathe into a new part and slid it into place. If the machine ran smoothly, the part was “correct.” If not, the part was wrong.

For him, the design in the filing cabinet, wherever it was, didn’t define the machine. The machine defined the design.

When the panel lies

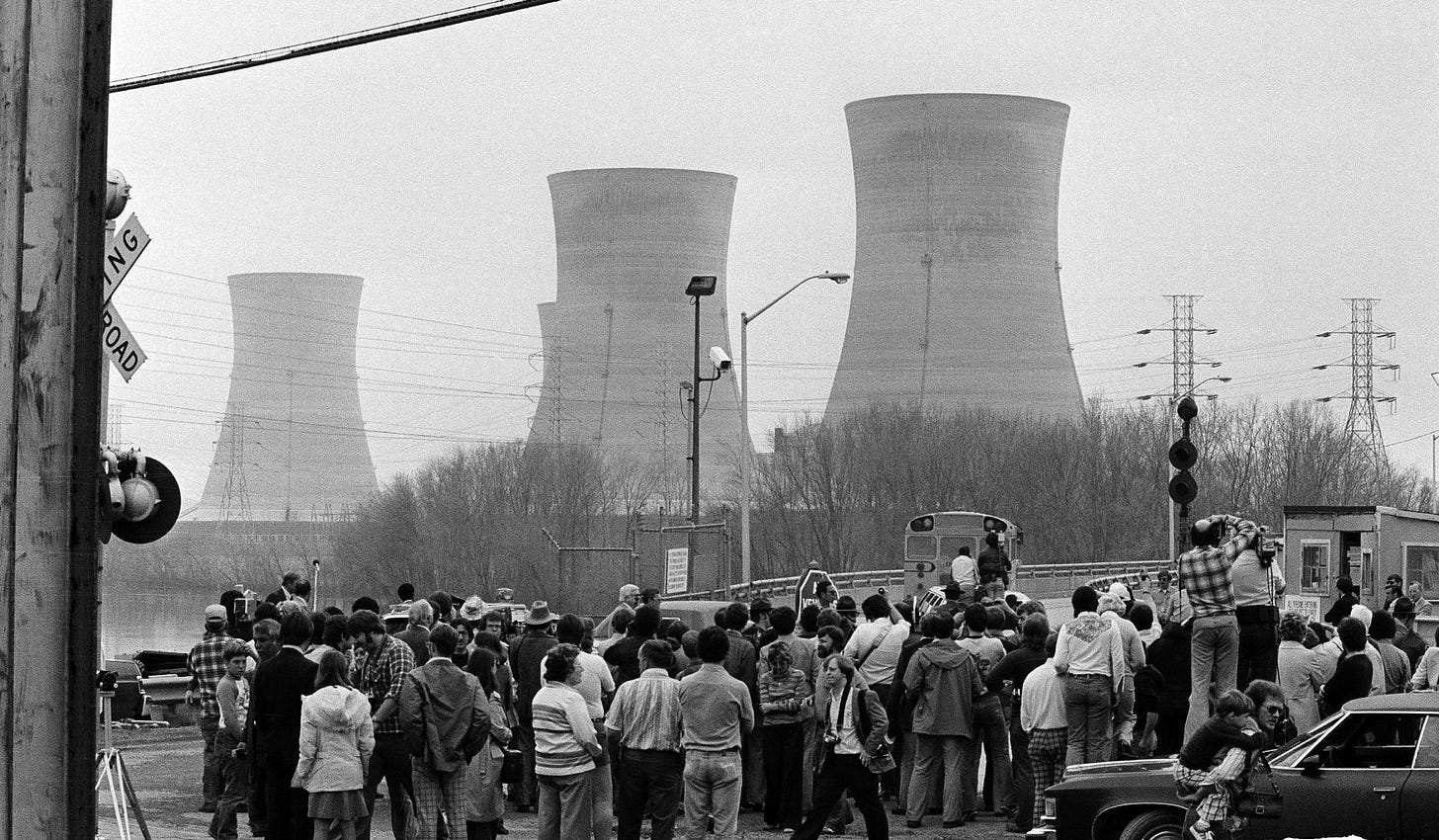

Thirty years later and a continent away, a nuclear power plant in Pennsylvania tried to do the opposite of what my grandfather did. It tried to make the design more real than the machine.

In the control room at Three Mile Island, the “machine” was mostly invisible. The reactor, the pipes, the valves — all of that lived behind concrete and steel. What the operators could see was a wall of panels: gauges, switches, and rows of small indicator lights.

One of those lights was supposed to tell them whether a relief valve in the reactor’s cooling system was open or closed.

Early one morning in 1979, that valve opened to release pressure and then failed to close again. Water that should have stayed in the system started escaping. The core began to overheat.

On the panel, the light went out.

In the language of the control room, a dark light meant “valve closed.” The descriptive truth, the one written into procedures and training and labels, was simple: light on, valve open; light off, valve closed.

The actual wiring was a little different. The light didn’t report the position of the valve. It reported the signal sent to the valve: once the control system commanded the valve to close, the light turned off, even if the valve stuck and stayed physically open.

So the panel said “closed” while the valve leaked.

Faced with ambiguous alarms and a blizzard of signals, the operators trusted the panel. They reasoned from the description they had: the light is off, so the valve is closed, so the problem must be something else. In trying to fix that “something else,” they made the situation worse.

Afterward, one of the outside experts brought in to study the human side of the accident was Don Norman. His conclusion was uncomfortably close to what my grandfather knew in his bones: the control room treated labels as more authoritative than behavior. The interface didn’t describe the machine; it told a comforting story about it.

The ground truth was in the stuck metal. The people in the room were arguing with the pipes using grammar and little lights.

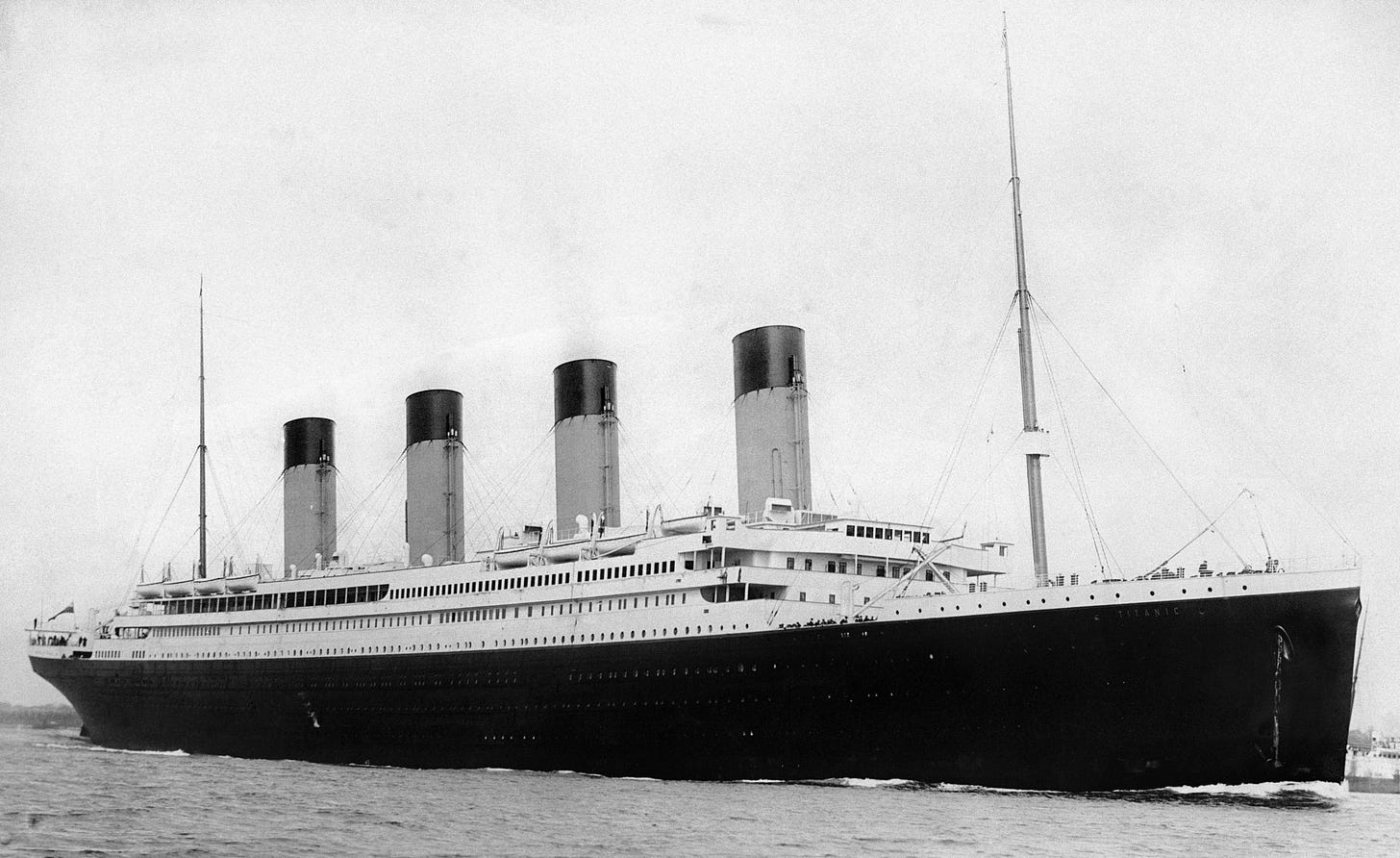

The unsinkable blueprint

If my grandfather’s workshop was a private lesson and Three Mile Island a technical one, the Titanic was the same mistake performed on the world’s biggest stage.

On paper, Titanic really was a marvel. The watertight compartments, the double bottom, the calculation that the ship could stay afloat with four of its forward compartments flooded — all of that came from serious engineering. Brochures and journalists compressed those details into a single, sticky phrase: “practically unsinkable.”

The phrase changed how people behaved.

It made sense not to clutter the decks with lifeboats: why prepare for a scenario your own language says is impossible? It made sense to steam fast through ice fields: delay felt more real than disaster. The descriptive truth — this ship does not sink — was so strong that the North Atlantic started to look like a technical detail.

On the night of April 14, 1912, the ocean declined to cooperate.

The iceberg tore open more compartments than the designers had allowed for. The watertight bulkheads did not reach high enough, so as the bow sank, water spilled over the top of one compartment into the next, like an ice-cube tray. The ship did exactly what a steel box full of water does under gravity.

From the design’s point of view, it was a freak, one-compartment-too-many event. From the sea’s point of view, it was just another object that didn’t float anymore.

The interesting part, if you’re thinking about systems, isn’t that people were arrogant. It’s how quickly “practically unsinkable” became a kind of operating system for decision-making. Officers, passengers, even regulators behaved as if the brochure were ground truth and the ocean were negotiable.

“Reality never signed off on any of our blueprints.”

In my grandfather’s shop, in the control room, on the North Atlantic, the same pattern shows up in different clothes.

We keep writing beautiful descriptions of how things are supposed to work — blueprints, panels, marketing lines, product specifications — and then reality points out, sometimes gently and sometimes with sirens, that it never signed off on any of them.

The machine, the reactor, the ship don’t care what’s on the page. They only “speak” in behavior. If there’s a source of truth in any physical system, that’s where it lives.

Everything else is a story we tell until we test it.

Three kinds of truth

Once you see this, it becomes hard not to sort the world into three kinds of truth:

Ground truth: what the system is actually doing—the machine on the factory floor, the valve deep in the pipe, the water in the hull.

Recorded truth: what our instruments say is happening—the indicator light, the sensor reading, the status screen.

Descriptive truth: what we say is true—the blueprint, the manual, the marketing phrase “unsinkable.”

In my grandfather’s shop, ground truth was the sound of the rollers when the machine finally ran smoothly. At Three Mile Island, it was the temperature of the core, not the state of a little lamp. On the Titanic, it was the angle of the deck under your feet.

Most of the time, these three line up well enough that we don’t notice the distinction. The machine roughly matches the drawing; the light usually tells the truth; the brochure is only a little optimistic.

The interesting stories begin when they drift apart.

In my grandfather’s world, recorded truth was thin. There were no dials, no digital logs. He went straight from descriptive truth (“this is a flour mill”) to ground truth (the way the rollers sounded at full speed). At Three Mile Island, the operators were trapped in recorded truth: a room full of symbols that, in one crucial case, lied. On the Titanic, descriptive truth—“practically unsinkable”—was strong enough to keep people calm on a freezing deck.

These are not just engineering curiosities. They are examples of a very human habit: once we have a good description of something, we start to trust the description more than the thing.

Specifications, promises, and what really happens

Today, we build far more with words than with steel.

We write specifications for products. We draft strategy documents. We ask AI systems to generate plans, summaries, even “requirements” for how other AI systems should behave. All of that lives in the realm of descriptive truth: our best story, at this moment, about what we hope will happen.

There is nothing wrong with that. The danger comes when we forget that it is only the first layer. A slide that says “this change will be simple” is not the same as a change that turns out to be simple. A document that says “this system will be safe” is not the same as a system that has actually failed safely, in the wild, many times.

My grandfather did not have a philosophy for this. He had a habit. Before he trusted any story about a machine, he plugged it in and watched it move.

That habit may be the most modern thing about him.